Attention-Guided Dynamic Model Selection for Single Image Super-Resolution Using Deep Ensemble Learning

Keywords:

Super-resolution; Single Image Super-Resolution; attention mechanism; ensemble deep learning; model selection; image reconstruction

Abstract

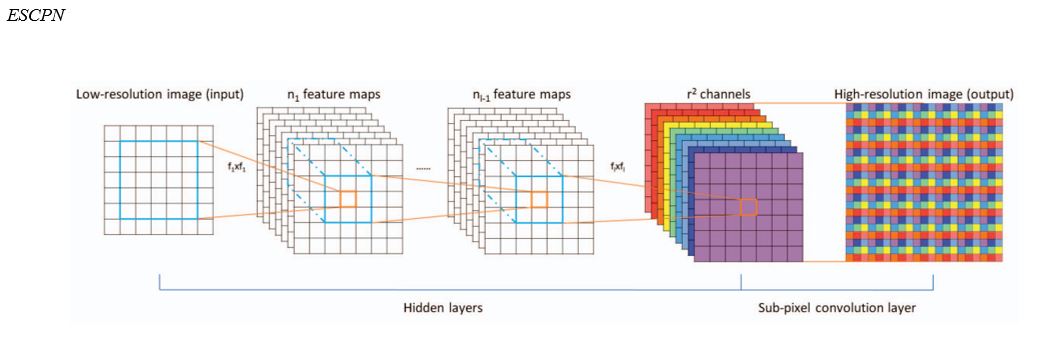

The rapid growth of digital imaging technologies has made high-quality visual data increasingly accessible; however, the storage, transmission, and restoration of high-resolution images remain challenging in bandwidth-limited and resource-constrained environments. Although compression methods reduce file size, they may remove critical details required for scientific, medical, remote-sensing, and security applications. To address this limitation, this study proposes an attention-guided dynamic ensemble framework for Single Image Super-Resolution (SISR). The proposed method integrates several representative super-resolution models, including LapSRN, SRResNet, ResNeXt-based SR, SRCNN/FSRCNN, and ESPCN, and uses an attention-guided selection module to assign the most suitable model to different image regions based on local characteristics such as edges, textures, and smooth areas. The selected outputs are then fused by a convolutional integration network to generate the final high-resolution image. Experiments on DIV2K and BSDS300 show that the proposed method improves reconstruction quality, particularly in terms of structural similarity and texture preservation. On DIV2K, the proposed method achieved 33.40 dB PSNR and 0.9172 SSIM; on BSDS300, it achieved 28.13 dB PSNR and 0.8497 SSIM. These findings indicate that dynamic model selection can reduce the limitations of individual super-resolution models and improve detail recovery in feature-diverse images.
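
The selection-and-fusion idea described above can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract describes a learned attention-guided selection module and a convolutional integration network, whereas this toy uses random per-pixel scores and a softmax-weighted sum as stand-ins. The names `softmax`, `fuse_candidates`, and `psnr`, the array shapes, and the random inputs are all assumptions made for illustration. The PSNR helper uses the standard definition, 10·log10(peak² / MSE).

```python
import numpy as np


def softmax(scores, axis=0):
    """Numerically stable softmax along the model axis."""
    shifted = scores - scores.max(axis=axis, keepdims=True)
    exps = np.exp(shifted)
    return exps / exps.sum(axis=axis, keepdims=True)


def fuse_candidates(candidates, scores):
    """Blend K candidate SR outputs with per-pixel weights.

    candidates: array (K, H, W), one upscaled image per model.
    scores:     array (K, H, W), per-pixel suitability of each model
                (hypothetical; a learned attention module would produce these).
    Returns an (H, W) image. A weighted sum stands in for the
    convolutional integration network mentioned in the abstract.
    """
    weights = softmax(scores, axis=0)  # weights sum to 1 at every pixel
    return (weights * candidates).sum(axis=0)


def psnr(reference, estimate, peak=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, peak]."""
    diff = reference.astype(np.float64) - estimate.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")
    return 10.0 * np.log10(peak ** 2 / mse)


# Toy data: three hypothetical model outputs and their per-pixel scores.
rng = np.random.default_rng(0)
candidates = rng.random((3, 8, 8))
scores = rng.normal(size=(3, 8, 8))
fused = fuse_candidates(candidates, scores)
```

Because the weights at each pixel are non-negative and sum to one, every fused pixel lies between the smallest and largest candidate value at that location. Sharper scores (larger magnitudes) push the blend toward hard per-region selection of a single model.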
